Proof of what's possible.

Every project below demonstrates a specific AI capability applied to a real operational problem. Each includes the challenge, our approach, the architecture, and measurable results.

Internal Tooling

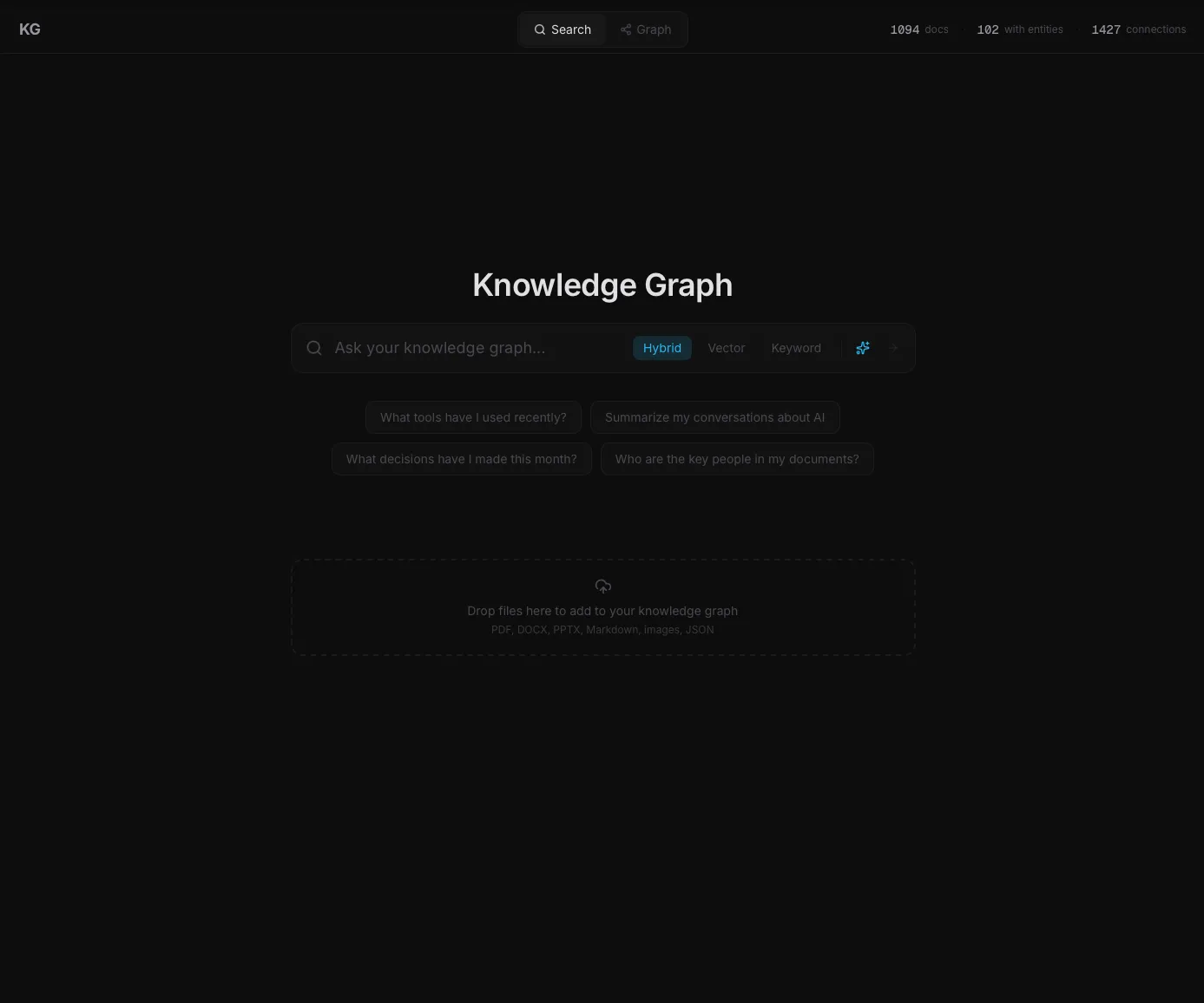

Building a Private Knowledge Graph That Actually Answers Questions

A local-first memory system that ingests your whole document pile, maps the people and companies inside it, and answers questions with citations back to the source files.

1,400+

Documents ingested and searchable locally

AI Proof of Concept

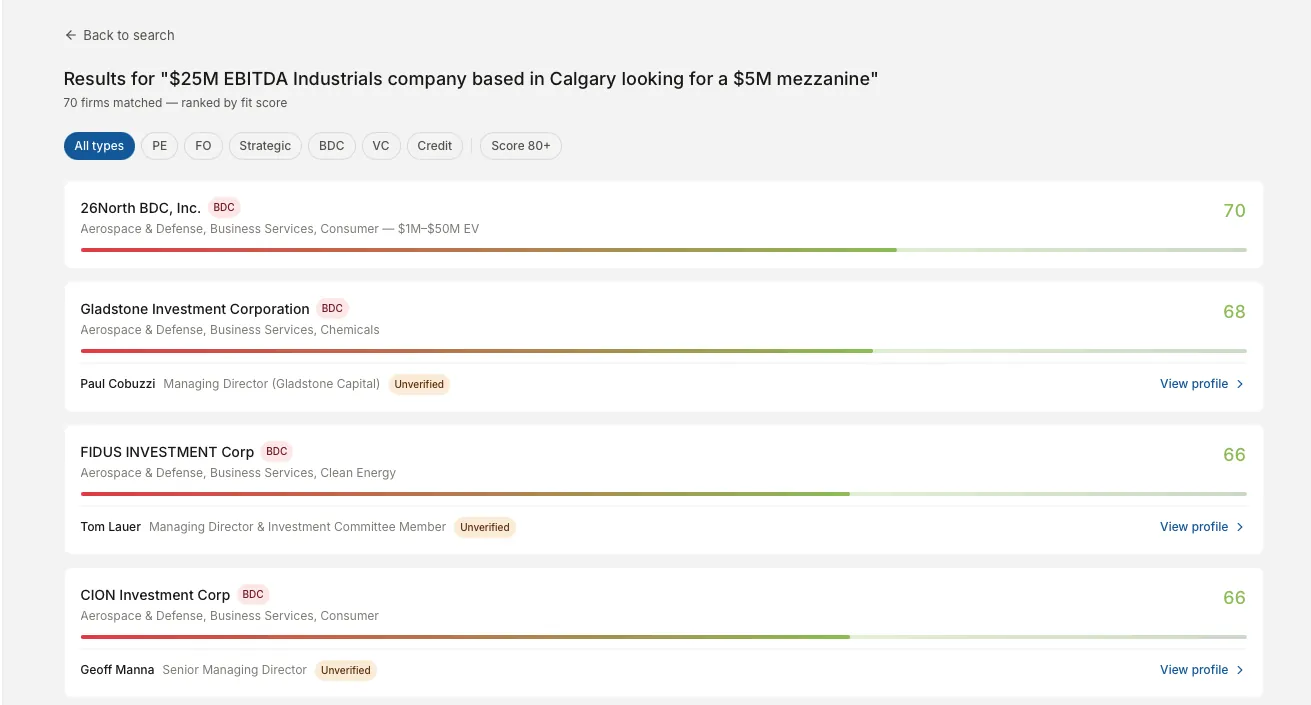

Automated Buy-Side Intelligence for M&A Deal Sourcing

A data platform that ingests public filings and web sources, resolves them into a unified entity database, and matches firms against deal parameters with a 1-to-100 fit score.

3,190

Deduplicated firms from 8,000+ raw entities

Functional Prototype

Centralized Intelligence Platform for Venture Capital

A multi-agent platform that automates deal sourcing, company research, investment thesis generation, and founder outreach from a single dashboard.

5-7 min

Investment thesis generation, down from 4-8 hours

Internal Tooling

Building a Private Knowledge Graph That Actually Answers Questions

The Challenge

Knowledge workers build up a mountain of documents over time. Pitch decks, reports, emails, meeting notes, spreadsheets, screenshots. After a couple of years, there are thousands of files. Folders stop working. Search misses anything that was worded differently than you remember. The information you need exists somewhere in the pile, but you cannot find it in the thirty seconds before a call.

Off-the-shelf tools do not fix this. Notion and Claude Projects do not automatically pull in new files from your inbox or Drive. Enterprise tools like Glean cost thousands per seat and require a vendor to customize. ChatGPT with file uploads forgets everything the moment you close the chat.

What was needed was a private memory system that runs on your own machine, ingests your whole document pile automatically, and lets you ask questions that pull from across all of it.

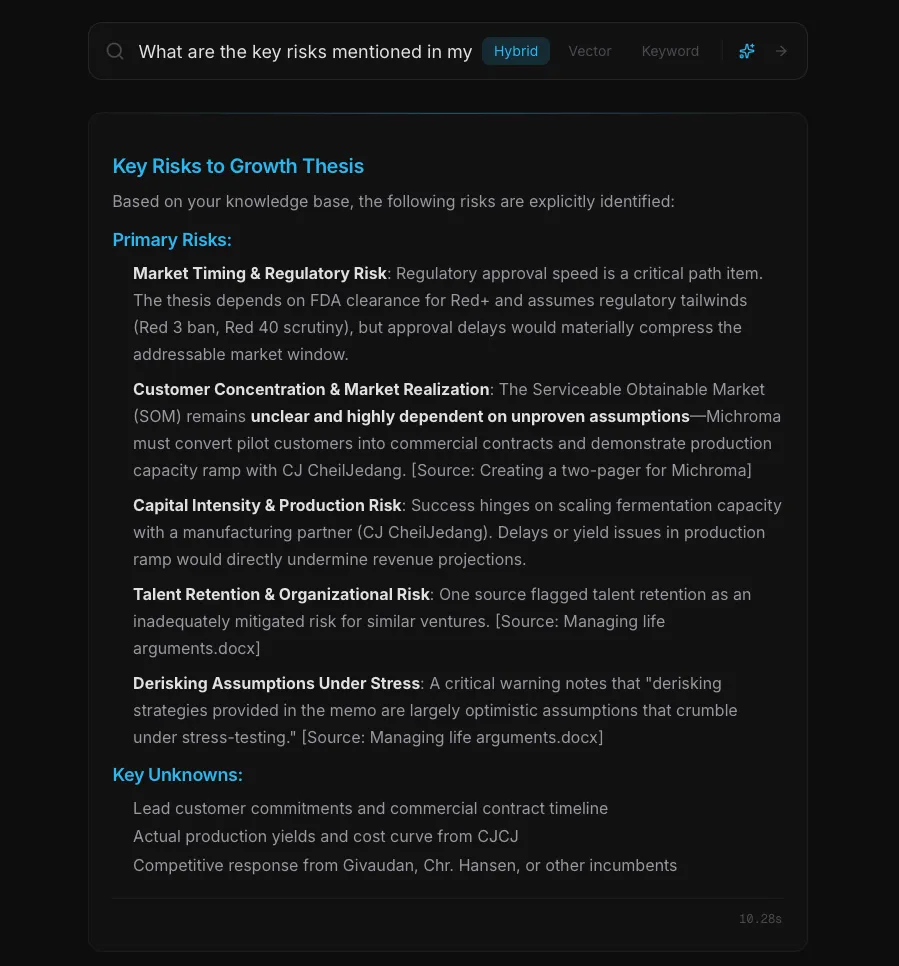

What We Built

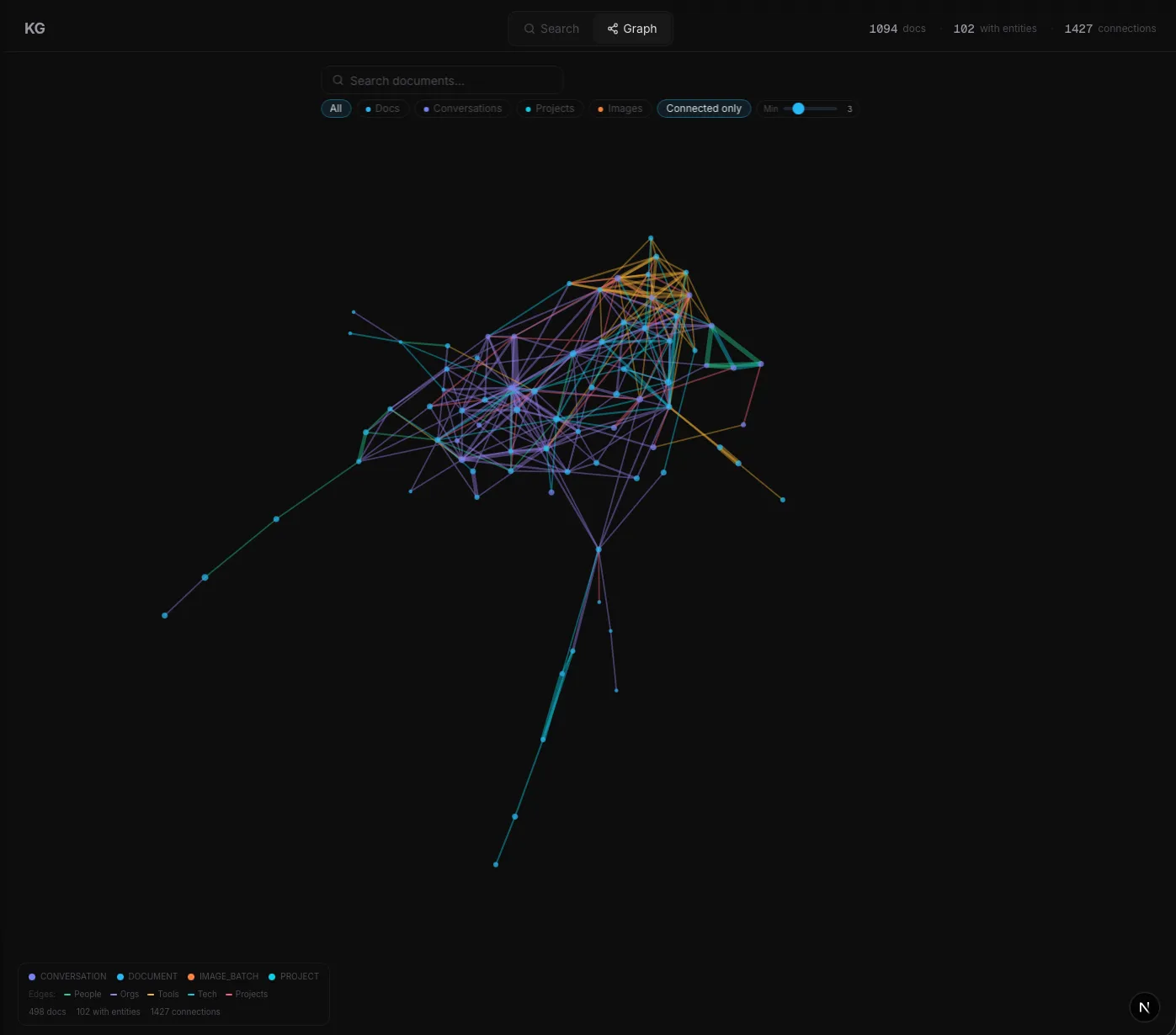

A local system that watches a folder, pulls in every new document automatically, and organizes the contents into a searchable memory. You ask a question in plain language and get an answer that draws from across your entire document pile, with links back to every source it used.
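The case study doesn't describe how the folder watcher is built. Here is a minimal sketch, assuming a simple polling approach with Python's standard library. `scan_for_new_files` is a hypothetical helper that remembers each file's modification time and reports only files that are new or changed since the previous scan.

```python
from pathlib import Path
from typing import Dict, List


def scan_for_new_files(folder: Path, seen: Dict[str, float]) -> List[Path]:
    """Return files under `folder` that are new or modified since the last scan.

    `seen` maps a file path to the modification time recorded at the
    previous scan; it is updated in place so the next call skips
    files that have not changed.
    """
    changed: List[Path] = []
    for path in sorted(folder.rglob("*")):
        if not path.is_file():
            continue
        mtime = path.stat().st_mtime
        key = str(path)
        if seen.get(key) != mtime:
            seen[key] = mtime
            changed.append(path)
    return changed
```

In practice this would run in a loop with a short sleep and hand each returned path to the ingestion step. An OS file-system event API would avoid rescanning, at the cost of platform-specific code.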

Every document is scanned for the important stuff: the people, companies, tools, and projects it mentions. Those get organized into a graph so you can see at a glance that three different documents all reference the same person, or that five projects all depend on the same vendor.
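Once entities have been extracted (by an AI model, per the case study), the graph step amounts to inverting "document mentions entity" into "entity appears in documents" and linking documents that share an entity. The sketch below assumes extraction has already produced a set of entity names per document. The function names are hypothetical.

```python
from collections import defaultdict
from itertools import combinations
from typing import Dict, Set, Tuple


def build_entity_index(doc_entities: Dict[str, Set[str]]) -> Dict[str, Set[str]]:
    """Invert {document: entities} into {entity: documents that mention it}."""
    index: Dict[str, Set[str]] = defaultdict(set)
    for doc, entities in doc_entities.items():
        for entity in entities:
            index[entity].add(doc)
    return dict(index)


def shared_entities(index: Dict[str, Set[str]], min_docs: int = 2) -> Dict[str, Set[str]]:
    """Entities mentioned in at least `min_docs` documents."""
    return {entity: docs for entity, docs in index.items() if len(docs) >= min_docs}


def document_edges(doc_entities: Dict[str, Set[str]]) -> Dict[Tuple[str, str], Set[str]]:
    """Connect each pair of documents that mention at least one common entity."""
    edges: Dict[Tuple[str, str], Set[str]] = {}
    for a, b in combinations(sorted(doc_entities), 2):
        common = doc_entities[a] & doc_entities[b]
        if common:
            edges[(a, b)] = common
    return edges
```

Querying `shared_entities` is what surfaces "three documents all reference the same person". The edge labels record which entities justify each connection.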

When you ask a question, the system searches two ways at once. It searches for documents that match the meaning of your question, and it searches for documents that contain the exact words. Then it combines both results and gives you an answer with links back to the source documents so you can verify what it said.
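The case study doesn't say how the semantic and keyword result lists are combined. Reciprocal rank fusion (RRF) is one common choice and is assumed here: each document earns `1 / (k + rank)` from every list it appears in, so documents ranked well by both searches rise to the top. The constant `k = 60` is a widely used default, not a value from the source.

```python
from typing import Dict, List


def reciprocal_rank_fusion(rankings: List[List[str]], k: int = 60) -> List[str]:
    """Merge several ranked lists of document ids into one ranking."""
    scores: Dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken by id for a stable order.
    return sorted(scores, key=lambda d: (-scores[d], d))


semantic_hits = ["a", "b", "c"]  # ranked by meaning (e.g. vector similarity)
keyword_hits = ["c", "a", "d"]   # ranked by exact-word match
fused = reciprocal_rank_fusion([semantic_hits, keyword_hits])
```

Because RRF uses only rank positions, it needs no calibration between the very different score scales of vector search and keyword search.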

Everything runs locally. No data leaves the machine except the text sent to the AI model to be read and summarized, and those calls never touch the raw files during search. The system handles plain-language questions about risks, decisions, people, or any topic that appears across the document pile, and answers with citations attached to every claim.

The same approach works for any business drowning in documents: staffing agencies, insurance brokerages, and marketing agencies all deal with the same problem at different scales.

Results

1,400+

Documents ingested and searchable

4,700+

People, companies, and projects extracted into the graph

7,000+

Connections mapped between documents

~$0.02

Cost per document to extract entities

< 1 sec

Typical query response time

100%

Source attribution on every answer

AI Proof of Concept

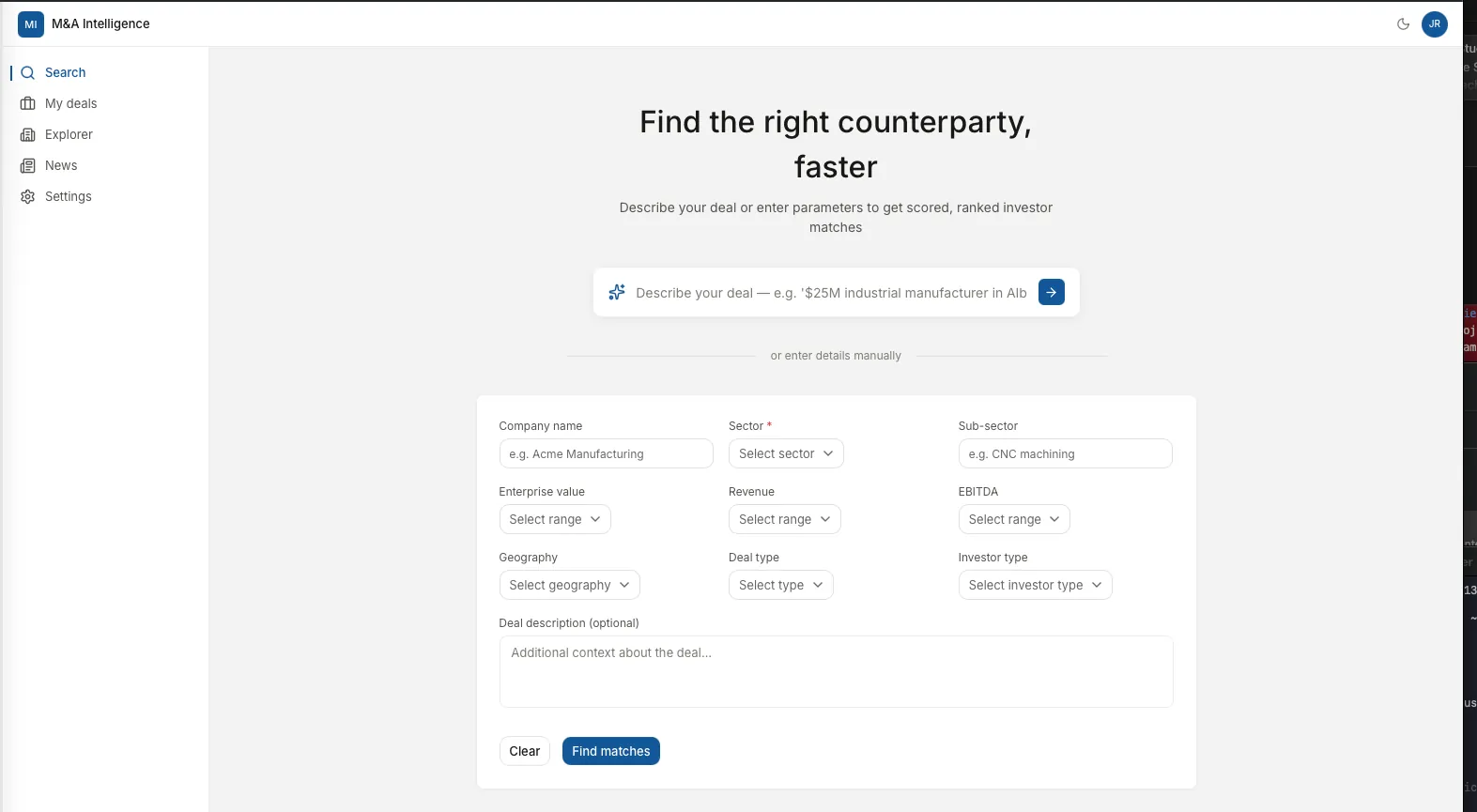

Automated Buy-Side Intelligence for M&A Deal Sourcing

The Challenge

Investment bankers running sell-side M&A engagements face a persistent operational bottleneck: identifying which buy-side firms are actively deploying capital in a specific sector, size range, and geography. The traditional approach relies on personal relationships, outdated spreadsheets, and expensive subscription databases that report self-declared interest rather than actual capital deployment behavior.

A banker placing a $25M industrials company might spend 15 to 20 hours manually researching potential acquirers, cross-referencing databases, and cold-calling contacts, only to produce a buyer list that misses active funds and includes firms that have shifted their mandates since the data was last updated.

What We Built

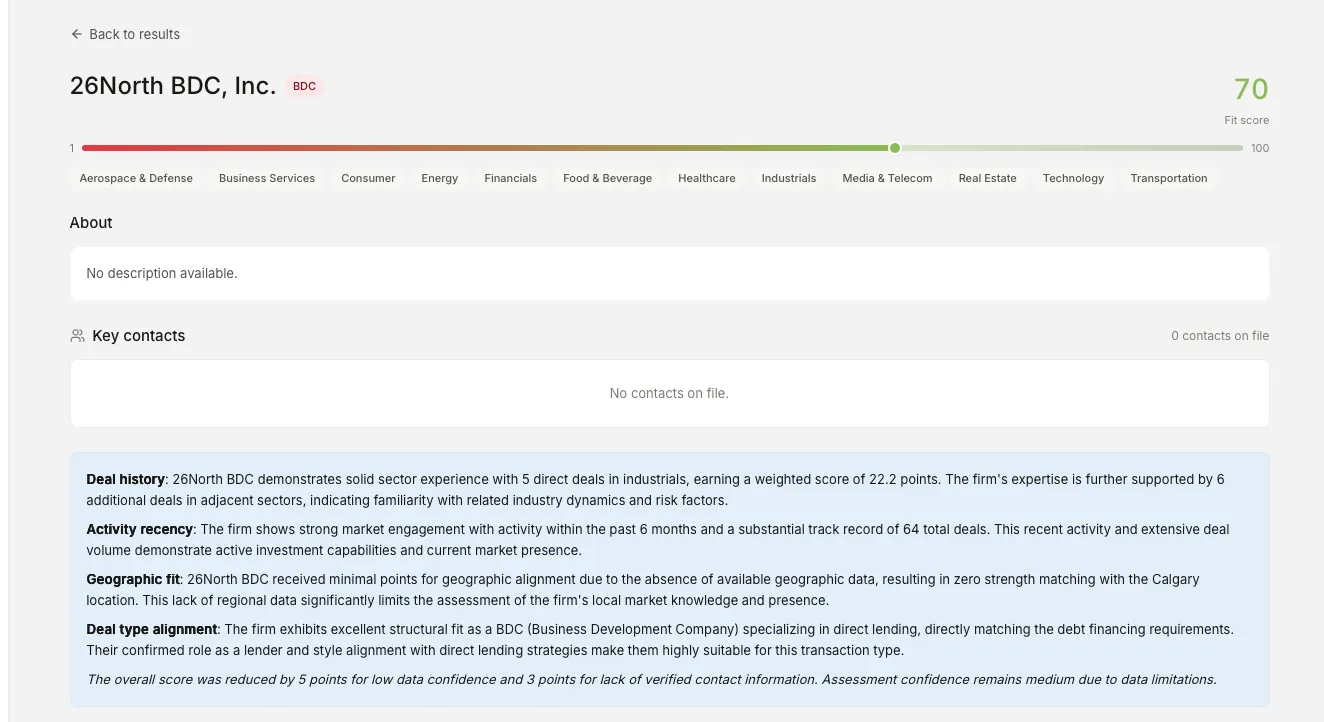

A fully automated data platform that ingests public filings and web sources, resolves them into a unified entity database, enriches each firm with person-level intelligence, and matches firms against deal parameters with a 1-to-100 fit score.

The system pulls from 14 distinct data sources through purpose-built scrapers, including SEC Form D filings, Form ADV registrations, 13F institutional holdings, BDC quarterly reports, law firm deal tombstones across 67 configured firms, press release feeds with LLM-powered entity extraction, and PE portfolio pages.

A three-pass entity resolution engine handles the core challenge: thousands of entity names with inconsistent formatting, abbreviations, and fund vehicle variations. Deterministic matching runs first, followed by fuzzy matching at a 97% threshold, then embedding-based matching using 768-dimensional vectors for names that are semantically similar but lexically different. A seven-pass fund grouper then consolidates resolved entities into canonical parent firms.
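A minimal sketch of the three-pass cascade, with several assumptions flagged: the legal-suffix list is hypothetical, `difflib.SequenceMatcher.ratio()` stands in for whatever fuzzy metric the real engine uses at its 97% threshold, and the 0.90 embedding threshold is invented. `embed` is any function returning a vector for a name (768-dimensional in the real system, tiny toy vectors in the test). Each pass runs only when the cheaper pass before it fails.

```python
import math
import re
from difflib import SequenceMatcher
from typing import Callable, Optional, Sequence, Tuple

# Hypothetical suffix list; the real normalization rules are not described.
LEGAL_SUFFIXES = {"llc", "lp", "inc", "ltd", "corp", "co"}


def normalize(name: str) -> str:
    """Lowercase, replace punctuation with spaces, drop legal suffixes."""
    tokens = re.sub(r"[^\w\s]", " ", name.lower()).split()
    return " ".join(t for t in tokens if t not in LEGAL_SUFFIXES)


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def resolve(
    name: str,
    canonical: Sequence[str],
    embed: Callable[[str], Sequence[float]],
    fuzzy_threshold: float = 0.97,
    embed_threshold: float = 0.90,  # assumption; not stated in the source
) -> Optional[Tuple[str, str]]:
    """Return (canonical name, pass that matched), or None if unresolved."""
    key = normalize(name)

    # Pass 1: deterministic match on the normalized key.
    for c in canonical:
        if normalize(c) == key:
            return c, "deterministic"

    # Pass 2: fuzzy string similarity (difflib ratio as a stand-in metric).
    fuzzy = [(SequenceMatcher(None, key, normalize(c)).ratio(), c) for c in canonical]
    if fuzzy:
        score, best = max(fuzzy)
        if score >= fuzzy_threshold:
            return best, "fuzzy"

    # Pass 3: embedding similarity for lexically different names.
    vec = embed(name)
    semantic = [(cosine(vec, embed(c)), c) for c in canonical]
    if semantic:
        score, best = max(semantic)
        if score >= embed_threshold:
            return best, "embedding"
    return None
```

Ordering the passes from cheapest to most expensive keeps embedding calls limited to the hard residue. A real pipeline would also cache canonical embeddings rather than recomputing them per lookup.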

The scoring engine pre-filters candidates using type synonyms and eligibility flags, then scores each on four equally weighted components: deal history, activity recency, geographic fit, and deal type alignment. Scoring completes in under 50 milliseconds for 200 candidates. For a plain-language overview of the retrieval techniques that underpin the unified entity database, see our guide to how RAG searches your own data instead of guessing.
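The two-stage scorer can be sketched as follows, under stated assumptions. Only the structure (synonym-based type pre-filter, eligibility flag, four equally weighted components, 1-to-100 output) comes from the case study. The synonym table, the five-deal saturation point for deal history, and the two-year linear decay for recency are hypothetical choices for illustration.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Set, Tuple

# Hypothetical synonym table mapping firm-type labels to a canonical type.
TYPE_SYNONYMS = {
    "private equity": "pe",
    "pe fund": "pe",
    "buyout": "pe",
    "family office": "family_office",
    "single family office": "family_office",
}


@dataclass
class Firm:
    name: str
    firm_type: str
    eligible: bool          # eligibility flag set upstream
    deals_in_sector: int
    last_deal: date
    regions: Set[str]
    deal_types: Set[str]


@dataclass
class DealParams:
    buyer_types: Set[str]   # canonical types, e.g. {"pe"}
    region: str
    deal_type: str


def canonical_type(label: str) -> str:
    label = label.strip().lower()
    return TYPE_SYNONYMS.get(label, label)


def prefilter(firms: List[Firm], deal: DealParams) -> List[Firm]:
    """Drop ineligible firms and firms whose type the deal does not target."""
    return [f for f in firms if f.eligible and canonical_type(f.firm_type) in deal.buyer_types]


def fit_score(firm: Firm, deal: DealParams, today: date) -> int:
    history = min(firm.deals_in_sector / 5, 1.0)                       # saturates at 5 deals
    recency = max(0.0, 1.0 - (today - firm.last_deal).days / 730)      # linear decay over ~2 years
    geography = 1.0 if deal.region in firm.regions else 0.0
    type_fit = 1.0 if deal.deal_type in firm.deal_types else 0.0
    raw = (history + recency + geography + type_fit) / 4               # equal weights, 0..1
    return round(1 + 99 * raw)                                         # map 0..1 onto 1..100


def rank(firms: List[Firm], deal: DealParams, today: date) -> List[Tuple[int, str]]:
    scored = [(fit_score(f, deal, today), f.name) for f in prefilter(firms, deal)]
    return sorted(scored, key=lambda s: (-s[0], s[1]))
```

Each score is a handful of arithmetic operations per candidate, which is consistent with a 200-candidate run finishing well inside a 50 ms budget.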

Results

3,190

Deduplicated parent firms from 8,000+ raw entities

12,175

Investment professionals indexed with role classification

8,983

Investment transactions tracked across sources

< 50ms

Scoring engine response time for 200 candidates

14

Distinct data sources integrated through custom scrapers

27

Sequential pipeline stages, fully automated

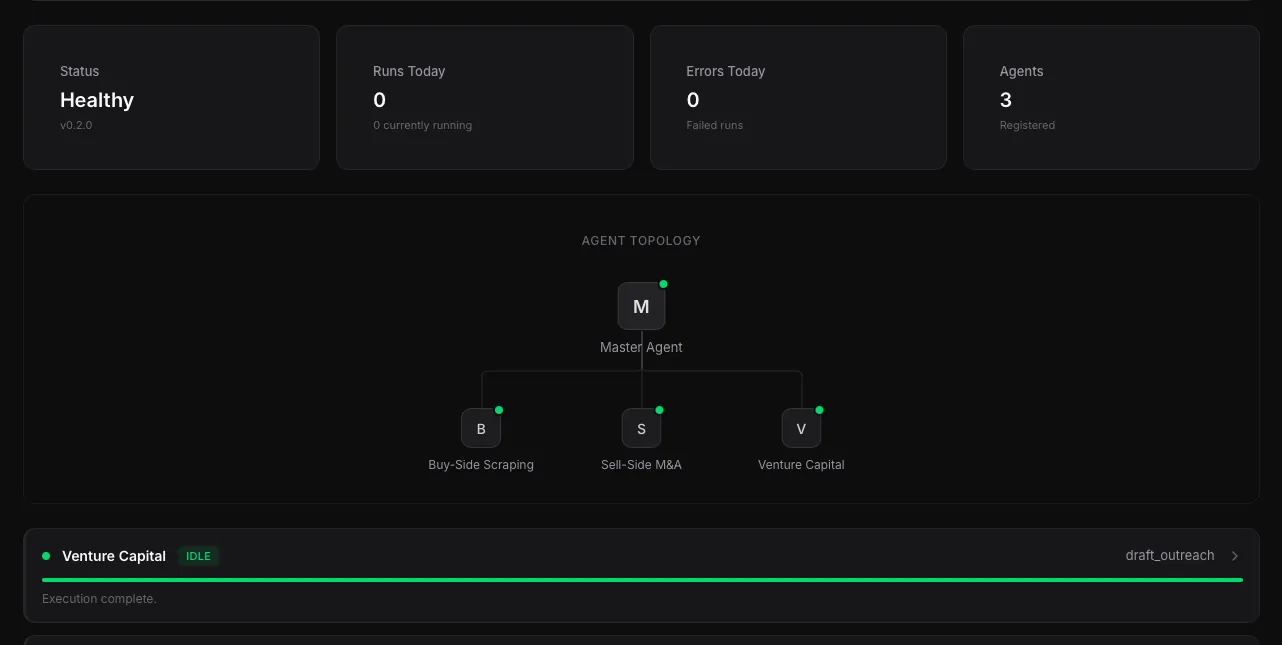

Functional Prototype

Centralized Intelligence Platform for Venture Capital

The Challenge

Early-stage venture capital funds operate across a patchwork of disconnected tools. Deal sourcing lives in spreadsheets and bookmarked portfolio pages. Company research happens across dozens of browser tabs. Investment theses are written from scratch in Word documents. Founder outreach is managed through individual email threads.

For a lean fund team, this fragmentation creates compounding problems. Sourcing coverage is limited by human bandwidth. The research-to-decision cycle is slow, with 4 to 8 hours of manual web research per company. And institutional knowledge is lost between team members because it lives in individual files rather than a shared, searchable system.

What We Built

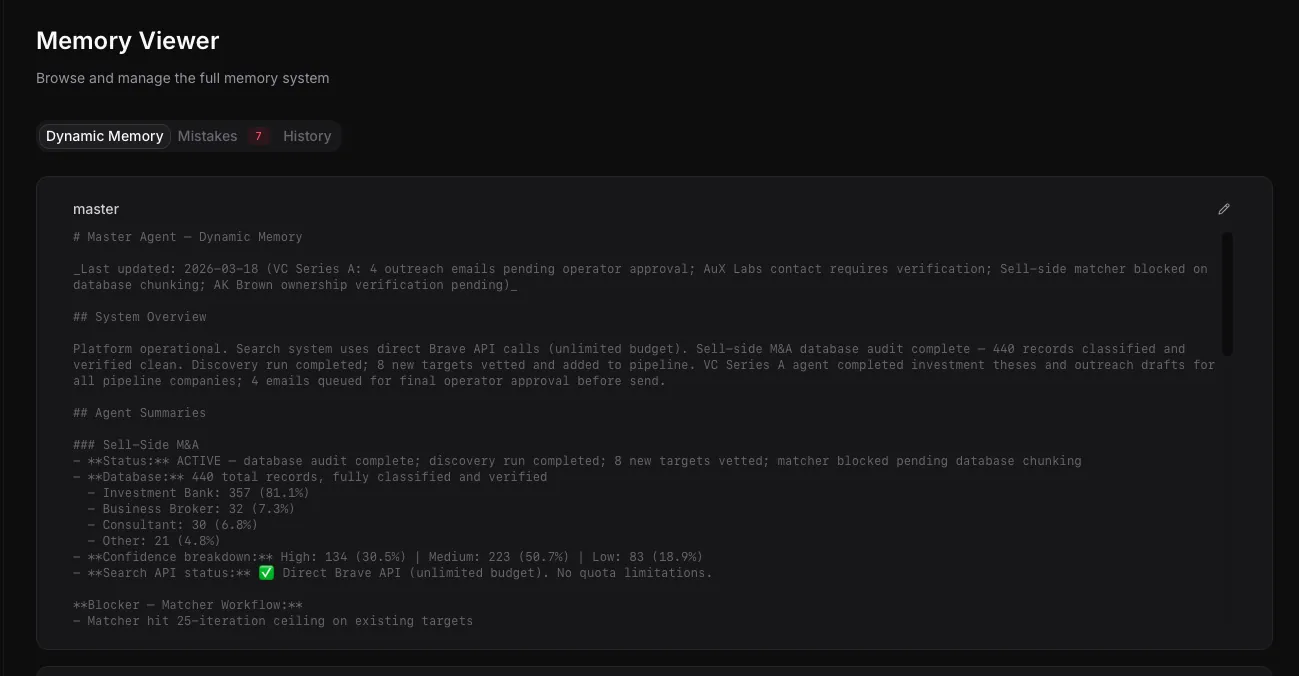

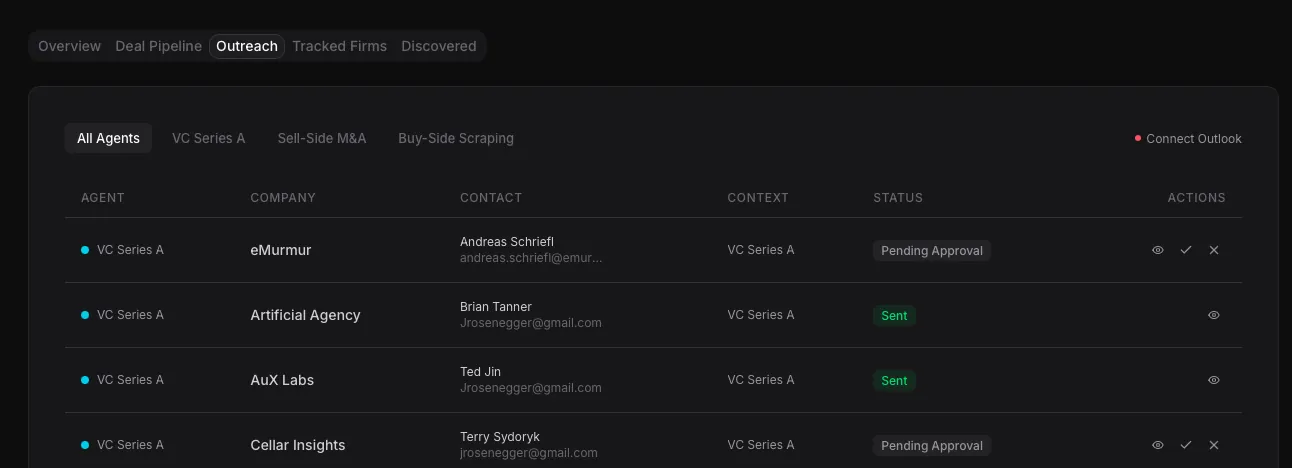

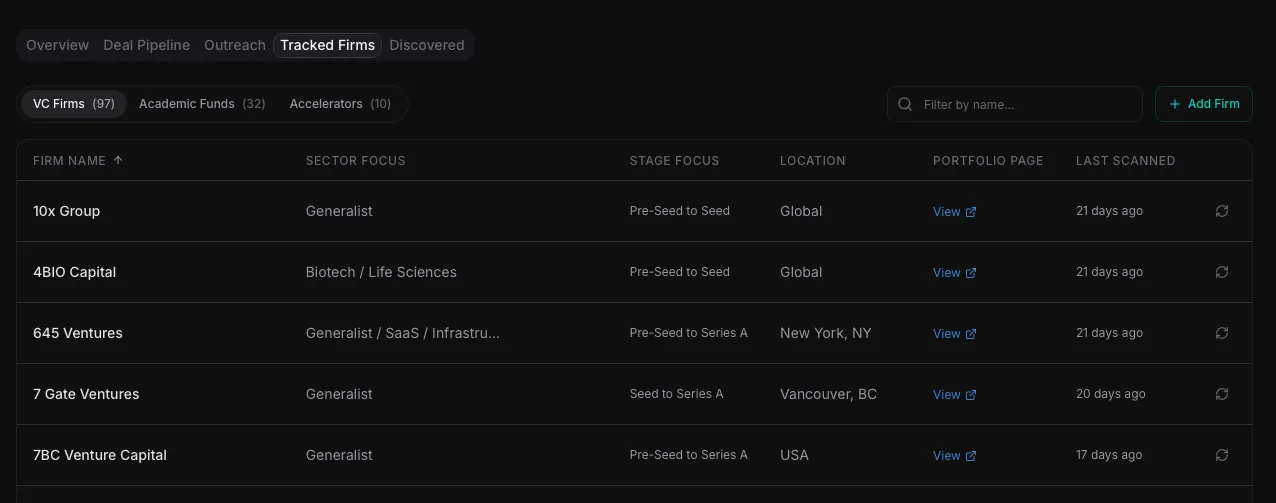

A locally hosted multi-agent orchestration platform that automates the end-to-end venture capital deal sourcing workflow. The system uses specialized AI agents, each scoped to a specific domain with its own tools, memory, and reasoning capabilities, coordinated by a master agent through a web-based dashboard.
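The coordination pattern can be sketched in a few lines. This is a simplified illustration, not the platform's actual design: each hypothetical `Agent` owns its own tool table and memory, and a hypothetical `MasterAgent` routes each task to the agent registered for that domain. In the real system the tools would wrap model calls and web lookups. Plain functions stand in for them here.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Agent:
    """A domain-scoped agent with its own tools and its own memory."""
    name: str
    domain: str
    tools: Dict[str, Callable[[str], str]]
    memory: List[str] = field(default_factory=list)

    def handle(self, tool: str, payload: str) -> str:
        if tool not in self.tools:
            raise KeyError(f"{self.name} has no tool {tool!r}")
        result = self.tools[tool](payload)
        self.memory.append(f"{tool}: {result}")  # history stays with this agent
        return result


class MasterAgent:
    """Routes each task to the agent responsible for its domain."""

    def __init__(self, agents: List[Agent]):
        self.by_domain = {agent.domain: agent for agent in agents}

    def dispatch(self, domain: str, tool: str, payload: str) -> str:
        agent = self.by_domain.get(domain)
        if agent is None:
            raise KeyError(f"no agent registered for domain {domain!r}")
        return agent.handle(tool, payload)
```

Keeping tools and memory per agent limits what each agent can see and do, which makes its behavior easier to audit than one agent holding every tool.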

The platform has five integrated components. A Portfolio Intelligence Scanner monitors 138 VC firms and has discovered over 800 portfolio companies through automated extraction. An Automated Research Engine dispatches 27 specialized tools to evaluate a company in 5 to 7 minutes, covering parallel data gathering, sector-adapted traction research, rigorous market sizing, competitive landscape mapping, and automated fact-checking. An Investment Thesis Generator synthesizes the research into professional 11-section documents that match institutional quality standards. An Outreach Management System requires human-in-the-loop approval, so no email is sent without explicit sign-off. A Pipeline Dashboard serves as the operational center for the entire workflow.
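The human-in-the-loop rule for outreach is essentially a small state machine: drafts can be created freely, but the send path refuses anything without a recorded approval. The sketch below is illustrative only. `OutreachQueue`, the status names, and the injected `send_fn` are hypothetical, and a real deployment would plug in its own mail provider.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, List, Optional


class Status(Enum):
    DRAFT = "draft"
    APPROVED = "approved"
    SENT = "sent"


@dataclass
class OutreachEmail:
    to: str
    body: str
    status: Status = Status.DRAFT
    approved_by: Optional[str] = None


class OutreachQueue:
    """Drafts can be created freely; sending requires a recorded human approval."""

    def __init__(self, send_fn: Callable[[str, str], None]):
        self._send = send_fn  # hypothetical hook to the mail provider
        self.emails: List[OutreachEmail] = []

    def draft(self, to: str, body: str) -> OutreachEmail:
        email = OutreachEmail(to, body)
        self.emails.append(email)
        return email

    def approve(self, email: OutreachEmail, reviewer: str) -> None:
        if email.status is not Status.DRAFT:
            raise ValueError(f"cannot approve an email in state {email.status.value}")
        email.status = Status.APPROVED
        email.approved_by = reviewer  # who signed off, kept for the audit trail

    def send(self, email: OutreachEmail) -> None:
        if email.status is not Status.APPROVED:
            raise PermissionError("email has not been approved by a human reviewer")
        self._send(email.to, email.body)
        email.status = Status.SENT
```

Enforcing the check inside `send` itself, rather than in the dashboard, means no code path can bypass sign-off.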

Each component feeds directly into the next: portfolio scans populate discovered companies, evaluations produce theses, theses inform outreach decisions, and all activity is tracked in a persistent, auditable record. The platform uses intelligent model routing across tiers for cost control; our guide on how to choose the right AI model for your business covers the decision framework, and our transparent breakdown of AI costs explains the economics.
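Tiered model routing can be as simple as a lookup from task type to the cheapest tier assumed adequate for it, with an escalation path when an output fails validation. Everything named below is hypothetical: the tier names, the per-1k-token prices, and the task-to-tier table are placeholders for illustration, not the platform's actual models or costs.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Tier:
    name: str
    cost_per_1k_tokens: float  # hypothetical price in USD


SMALL = Tier("small", 0.0002)
MEDIUM = Tier("medium", 0.003)
LARGE = Tier("large", 0.015)
ORDER = [SMALL, MEDIUM, LARGE]

# Hypothetical routing table: cheapest tier assumed adequate for each task.
ROUTES: Dict[str, Tier] = {
    "extract_fields": SMALL,
    "classify": SMALL,
    "summarize_source": MEDIUM,
    "fact_check": MEDIUM,
    "write_thesis": LARGE,
}


def route(task: str, escalate: bool = False) -> Tier:
    tier = ROUTES.get(task, MEDIUM)  # unknown tasks default to the middle tier
    if escalate:  # e.g. the previous attempt failed validation
        tier = ORDER[min(ORDER.index(tier) + 1, len(ORDER) - 1)]
    return tier


def estimate_cost(tasks: List[Tuple[str, int]]) -> float:
    """Total cost for (task, token count) pairs under the routing table."""
    return sum(route(task).cost_per_1k_tokens * tokens / 1000 for task, tokens in tasks)
```

The savings come from sending high-volume, low-judgment steps such as extraction and classification to the cheap tier, and reserving the expensive tier for the final synthesis.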

Results

138

VC firms monitored automatically, up from 20-30 tracked manually

800+

Portfolio companies discovered through automated scanning

5-7 min

Time to produce investment thesis, down from 4-8 hours

$0.60

Average cost per thesis vs. ~$200 in analyst time

6

Disconnected tools consolidated into one platform

100%

Fact-check coverage across generated theses